Integration testing is a crucial part of ensuring the smooth operation of complex software systems. Instead of focusing on individual parts, integration tests examine how different components, like classes, modules, binaries, systems, or even entire products, work together.

The purpose of integration tests

Integration tests are like the conductor of the orchestra, making sure all the instruments play in harmony. While unit tests assess individual musical notes, integration tests look at the bigger picture, ensuring that multiple systems interact smoothly.

This testing typically exercises the main executable built with your changes, or whichever part of the software interacts directly with users or external systems. Unlike unit tests, integration tests may come with specific requirements, such as who can run them, where they can be executed, or when they can run.

Downsides of Integration Testing

Integration tests are not always the best tool for the job. Compared with unit tests, they have some distinct disadvantages:

- flakier and require more infrastructure than unit tests

- slower both to set up and run

- not scalable because of the resource and time requirements as well as the lack of isolation

- unclear ownership because the test may span multiple units

- lack of standardization: setups vary widely and are often tied to a platform rather than to standard tooling for the language

Key components

System Under Test (SUT)

The system or application that your integration test exercises, together with the environment it runs in. Think of the environment as the container for your test. Integration tests are well suited to checking that your application works across a variety of environments and system configurations. Tools such as Docker can provide these environments.

Necessary Test Data

Before you can start the test, you need to initialize the environment and application under test with an initial configuration. If you’re testing a command line application, this can look like a set of flags or a unique file system configuration.
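As a rough illustration of preparing test data for a command line application, the sketch below seeds a temporary directory with an input file and passes its path as a flag. The `FAKE_APP` script, the `--input` flag, and the `run_with_test_data` helper are all hypothetical stand-ins for a real application and its options.

```python
import subprocess
import sys
import tempfile
from pathlib import Path

# Hypothetical stand-in for the real application: it counts the lines of the
# file named by the (assumed) --input flag and prints the number.
FAKE_APP = """
import argparse, pathlib
parser = argparse.ArgumentParser()
parser.add_argument("--input", required=True)
args = parser.parse_args()
print(len(pathlib.Path(args.input).read_text().splitlines()))
"""


def run_with_test_data(lines):
    """Seed a scratch directory with input data, then run the app against it."""
    with tempfile.TemporaryDirectory() as workdir:
        # File system configuration: one input file inside an isolated directory.
        input_file = Path(workdir) / "input.txt"
        input_file.write_text("\n".join(lines) + "\n")

        # Flag configuration: point the app at the seeded file.
        result = subprocess.run(
            [sys.executable, "-c", FAKE_APP, "--input", str(input_file)],
            capture_output=True,
            text=True,
            check=True,
        )
        return result.stdout.strip()
```

Creating the directory inside the helper keeps each run isolated, so one test's files cannot leak into the next.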

Behavior verification

After the System Under Test (SUT) is running with the Necessary Test Data, you must be able to verify if the system is performing as expected. There are two main approaches for this verification:

Assertion-based verification

Much like with unit tests, assertions are explicit checks about the intended behavior or outcome of the system.
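A minimal sketch of assertion-based verification might look like the following. The `FAKE_APP` script and the `run_app` helper are hypothetical; in practice the command would launch your real executable.

```python
import subprocess
import sys

# Hypothetical SUT: a tiny script that upper-cases its first argument.
FAKE_APP = "import sys; print(sys.argv[1].upper())"


def run_app(argument):
    """Run the SUT as a separate process and capture what it prints."""
    return subprocess.run(
        [sys.executable, "-c", FAKE_APP, argument],
        capture_output=True,
        text=True,
    )


def test_uppercases_argument():
    result = run_app("hello")
    # Explicit checks on the observable behavior: exit code and output.
    assert result.returncode == 0
    assert result.stdout.strip() == "HELLO"
```

The test only looks at what an outside caller could observe, which is what separates it from a unit test of the code inside the binary.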

A/B Comparison Verification

Instead of defining explicit assertions, A/B comparison involves running two copies of the SUT, sending both the same data, and comparing the output. Because the intended behavior is not explicitly defined, a human must review the differences manually to confirm that any changes are intended.
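One way to sketch an A/B comparison is to run both copies with the same arguments and produce a diff for a person to review. `APP_A`, `APP_B`, and the helper names are hypothetical; the two inline scripts stand in for two builds of the same program.

```python
import difflib
import subprocess
import sys

# Hypothetical builds of the SUT. B changes the capitalization of the label.
APP_A = "import sys; print('total:', sum(map(int, sys.argv[1:])))"
APP_B = "import sys; print('Total:', sum(map(int, sys.argv[1:])))"


def run(app, args):
    """Run one copy of the SUT and return its output as a list of lines."""
    result = subprocess.run(
        [sys.executable, "-c", app, *args],
        capture_output=True,
        text=True,
        check=True,
    )
    return result.stdout.splitlines()


def ab_diff(args):
    """Send the same input to both copies and return a unified diff."""
    return list(
        difflib.unified_diff(
            run(APP_A, args),
            run(APP_B, args),
            fromfile="A",
            tofile="B",
            lineterm="",
        )
    )


if __name__ == "__main__":
    # No assertion here: the diff is printed for a human to judge.
    print("\n".join(ab_diff(["1", "2"])))
```

An empty diff means the two copies agree on this input. A non-empty diff is not automatically a failure; it is a prompt for review.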

Types of integration tests

Functional tests

Functional tests evaluate how the SUT behaves, much like a unit test examines a specific piece of functionality. However, a functional test interacts with the binary as a whole, for example through its command line, rather than with the individual classes inside it, and it requires a separate SUT setup.

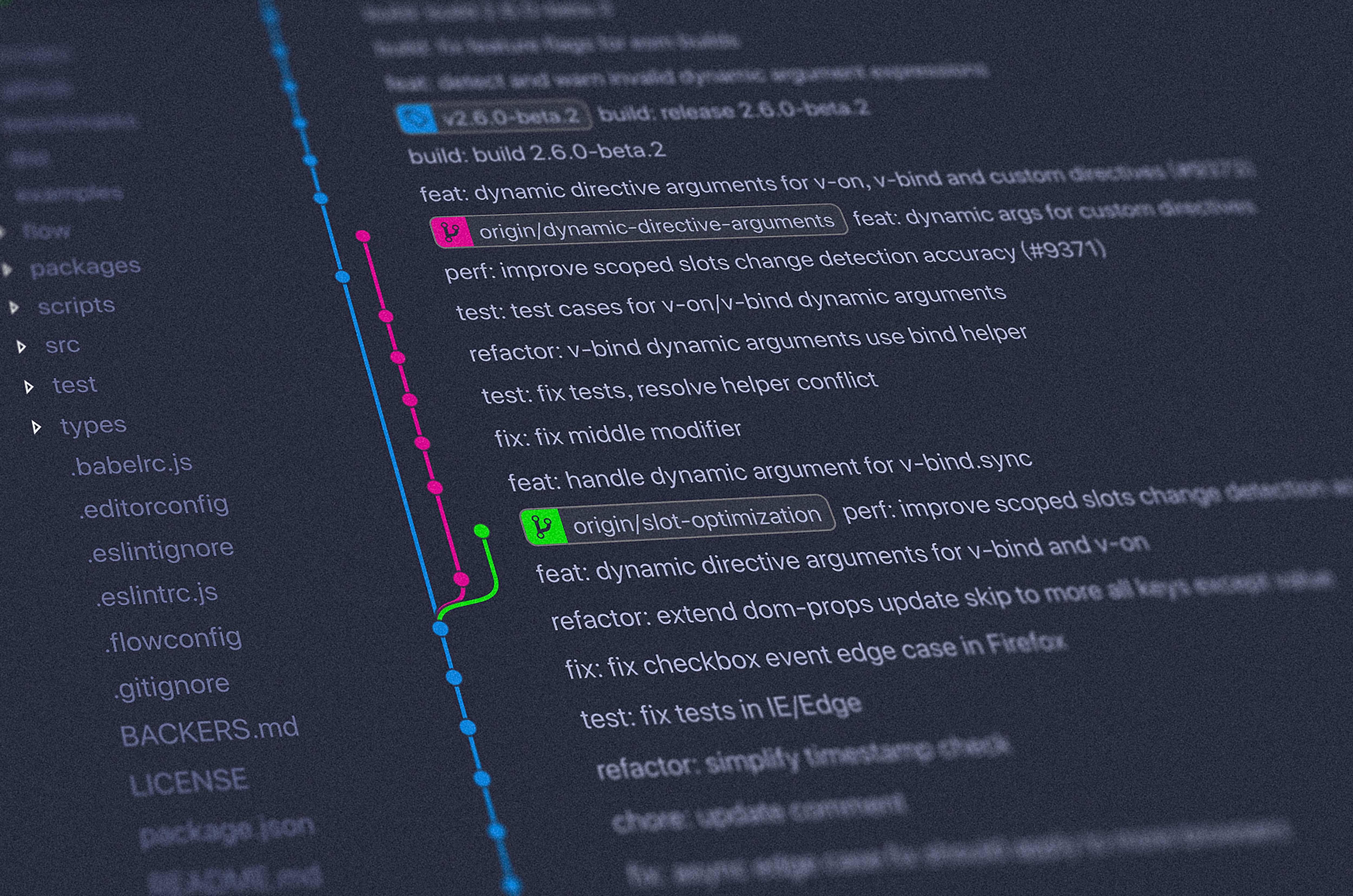

Diff Tests (Differential tests)

Differential tests typically involve comparing two or more versions of the SUT by giving them the same input and observing differences in their behaviors. This approach is handy when you want to compare a new version of a feature or product to an older one, like during a migration.

Performance tests

Performance tests focus on measuring changes in performance by analyzing factors like time, disk space, memory usage, and more. These tests involve running multiple SUTs and observing the differences in their performance.
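As a hedged sketch, a performance comparison could time two SUTs over several runs and report the ratio. The `BASELINE` and `CANDIDATE` scripts and both helper functions are hypothetical, and only wall-clock time is measured here; disk or memory measurements would need other tooling.

```python
import statistics
import subprocess
import sys
import time

# Hypothetical SUTs: two inline scripts standing in for two builds.
BASELINE = "sum(range(1000))"
CANDIDATE = "sum(range(2000))"


def median_runtime(script, repeats=3):
    """Run the script several times and return the median wall-clock time."""
    samples = []
    for _ in range(repeats):
        start = time.perf_counter()
        subprocess.run([sys.executable, "-c", script], check=True)
        samples.append(time.perf_counter() - start)
    return statistics.median(samples)


def compare(baseline, candidate, repeats=3):
    """Measure both SUTs and report how the candidate compares to the baseline."""
    base = median_runtime(baseline, repeats)
    cand = median_runtime(candidate, repeats)
    return {"baseline_s": base, "candidate_s": cand, "ratio": cand / base}


if __name__ == "__main__":
    print(compare(BASELINE, CANDIDATE))
```

Taking the median of several runs dampens noise from process startup and other activity on the machine, though a real performance test would usually need more runs and a quieter environment.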

When to use

I would recommend starting with an integration test. An integration test is often very similar to “run it and see how it works”, except that you capture those steps programmatically. Integration tests also keep you focused on the end-user experience of your program. While they don’t replace unit tests, they do provide a more holistic view of the behavior of your program.

Integration testing is a trusty companion when you want to ensure that all the parts of your software work harmoniously, especially in user-facing scenarios, diverse environments, and during version changes or migrations. Since so much software brings in so many external dependencies, it’s a valuable tool in your arsenal for building robust and reliable software.